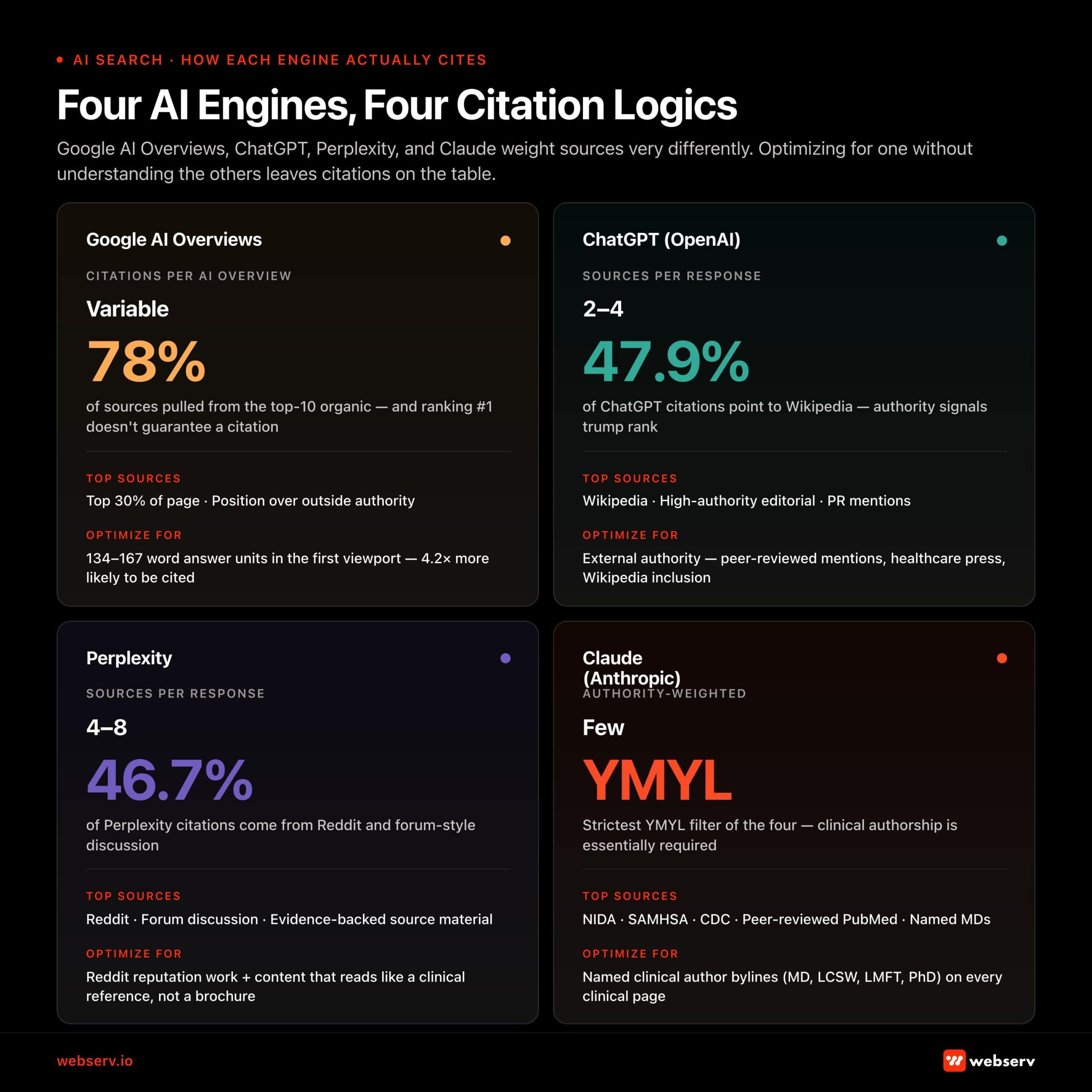

The discovery layer for behavioral health treatment has moved. AI search engines are now the front page of Google for high-intent treatment queries, and the rehab operators capturing citations in ChatGPT, Perplexity, Claude, and Google AI Overviews are taking share from operators who still optimize only for blue-link rankings.

Most agencies are still selling 2024-vintage AEO tactics: llms.txt files, schema audits, surface-level FAQ markup. Those tactics do not move citation share. Real AEO is a seven-layer playbook covering sentence structure, code structure, digital PR, video, social, reviews, and site structure, executed against a baseline of solid technical SEO and clinical-review editorial workflow.

This guide walks through what changed, what the seven layers actually look like in production, what success looks like at twelve weeks, and the diligence questions to ask any agency selling AEO services in 2026.

A treatment center we audited last quarter looked great on paper. Their Semrush AI tracking dashboard showed a “position 1” AI Overview citation for a competitive head term with meaningful monthly search volume. The marketing team was reporting it up the chain as a win.

We checked the live SERP. The “position 1” citation was buried behind a “load more” button two screens down inside the AI Overview.

A separate citation appeared inside a paperclip icon that collapsed by default. A user looking at the result page would never see either reference without deliberately clicking to expand the AI Overview.

The dashboard was technically accurate. The citation existed. The actual user experience was that the brand was effectively invisible. This is the gap most SEO programs for treatment centers are still pretending does not exist.

This is the 2026 reality of AI search for treatment centers. AI Overviews, ChatGPT, Perplexity, and Claude are rewriting the discovery layer for high-intent rehab queries. The citations are real. They are also hidden, mercurial, and routinely misreported by the tooling operators use to track them.

AI visibility is mercurial. Anyone who tells you they have cracked the code is lying. These platforms and features change weekly, and the marketing company you work with has to be able to roll with the punches and change alongside them.

Trevor Gage, Director of Earned & Owned Media, Webserv

Key Takeaways

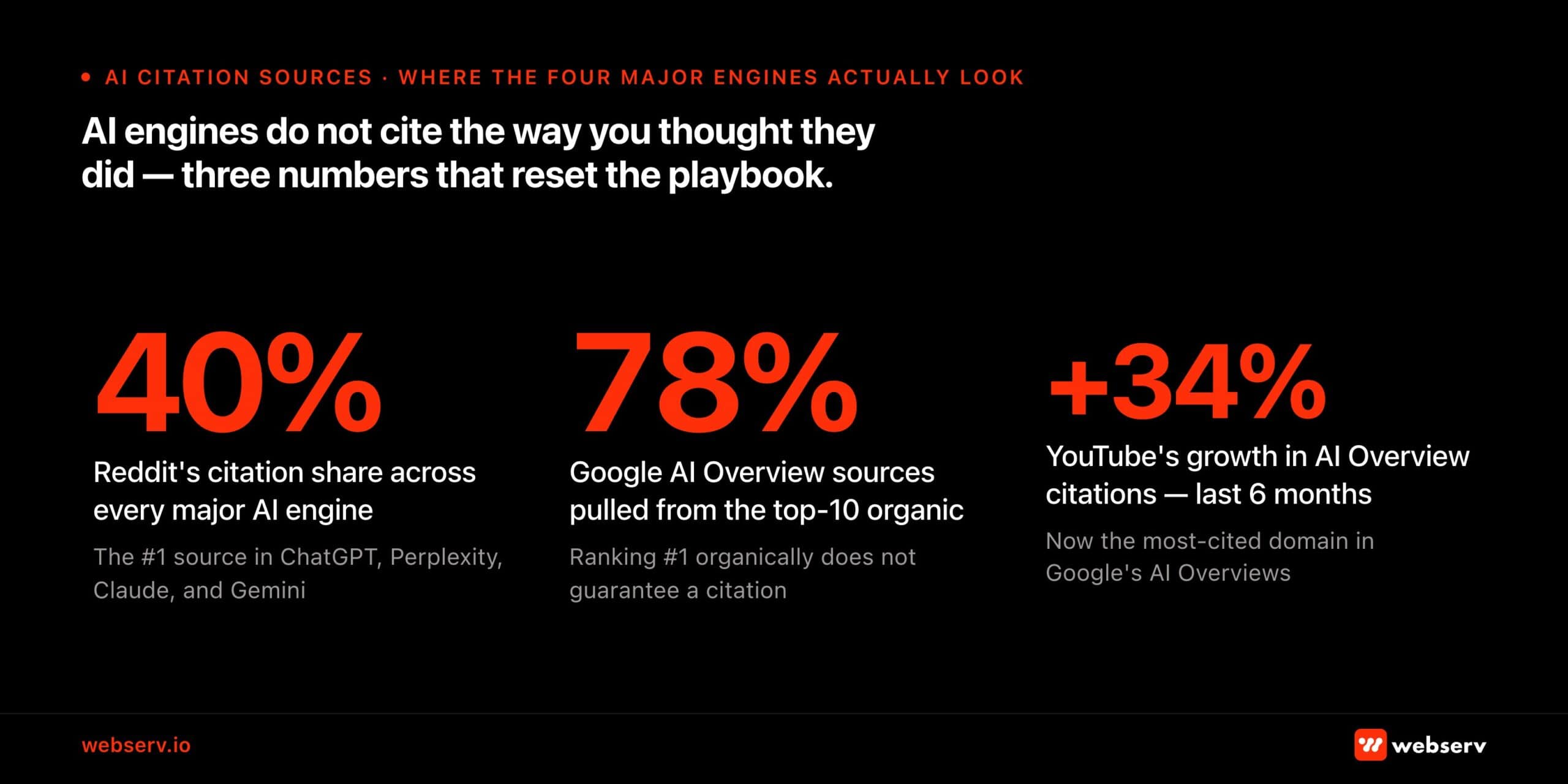

- AI Overviews, ChatGPT, Perplexity, and Claude pull citations using four distinct logics. Reddit is the #1 source across every major AI engine (~40% citation share). YouTube is now the most cited domain in AI Overviews, growing 34% in the last six months.

- Behavioral health is treated as YMYL by every major AI engine, which means named clinical authorship, evidence sourcing, and external authority citations are structural requirements, not nice-to-haves. Anonymous or promotional content is filtered out.

- The llms.txt pitch most agencies are still selling does not move citation share. Real AEO is a seven-layer playbook: sentence structure, code structure, digital PR, video, social, reviews, and site structure. The layers compound.

- Dashboard metrics routinely report “wins” that do not match user reality. Semrush “position 1” citations are frequently buried behind load-more buttons or paperclip expanders. Every claimed citation needs SERP-level verification before it gets reported.

- A 90-day playbook produces measurable lift. The Beverly Hills residential program in this guide ran a slightly extended version and produced a 384% mention increase across ChatGPT, Perplexity, Claude, and Gemini. The compounding window is open right now and closing as competitors invest.

This guide explains how Google AI Overviews, ChatGPT, Perplexity, and Claude actually select sources, why behavioral health is a special case under YMYL scrutiny, and the seven layers of real AI optimization for treatment center content.

It also covers the llms.txt distraction most agencies are still selling, what a citation lift looks like in practice, and a 90-day playbook treatment centers can run against this quarter.

What changed in 2026 (and why this guide is dated this quarter)

Four platform-level shifts since this guide was first published reshape the AEO playbook for treatment centers. The seven-layer framework still holds. The context around it has moved.

Google’s March 2025 and December 2025 core updates extended E-E-A-T scrutiny beyond classic YMYL. Healthcare-adjacent content now faces the same authorship, citation, and authority standards that previously applied only to medical and financial pages.

Industry tracking from 2025 shows 73% of top-ranking YMYL pages now display detailed author credentials, up from 58% the year before. Treatment center pages without named clinical authorship are at a structural disadvantage that did not exist 18 months ago.

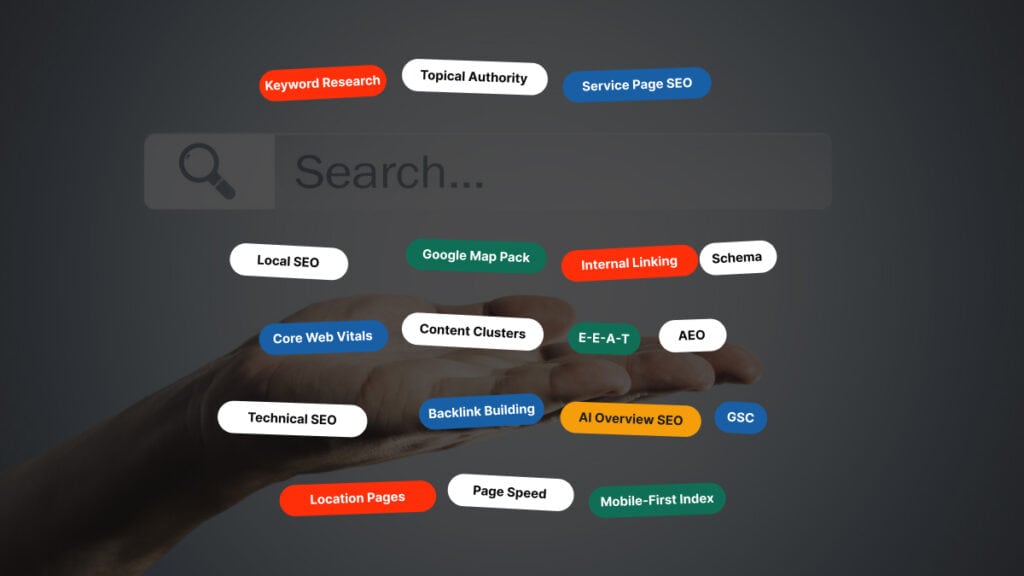

The same architecture work that produces topical authority for organic search now drives AI citation share. See our Topical Authority for Treatment Centers explainer for the architecture layer this depends on.

AI citation share is more concentrated than PageRank ever was. The 5W 2026 AI Platform Citation Source Index found that the top 15 domains capture 68% of all consolidated AI citation share across ChatGPT, Perplexity, Claude, AI Overviews, and Gemini.

That concentration creates a winner-take-most dynamic for treatment centers willing to invest before competitors catch up. The same finding showed citation share can shift within weeks rather than months.

ChatGPT’s Reddit citation share fell from roughly 60% to 10% over a six-week window in late 2025 after a single Google parameter change. Track citation share weekly, not quarterly.
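
A weekly check like the one described above can be as simple as comparing each week's share against the previous week and flagging large moves. The sketch below is illustrative only: the `WeeklyShare` record, the 10-point threshold, and the sample readings are assumptions made up for the example, not real measurements or any tracking tool's export format.

```python
from dataclasses import dataclass


@dataclass
class WeeklyShare:
    week: str     # any week label; "W1".."W6" here are placeholders
    share: float  # citation share in percentage points (0-100)


def flag_swings(series: list[WeeklyShare], threshold: float = 10.0) -> list[tuple[str, float]]:
    """Return (week, change) pairs where share moved by at least `threshold`
    percentage points compared with the previous week."""
    flagged = []
    for prev, curr in zip(series, series[1:]):
        change = curr.share - prev.share
        if abs(change) >= threshold:
            flagged.append((curr.week, change))
    return flagged


# Made-up readings shaped like a fast six-week drop (hypothetical data).
history = [
    WeeklyShare("W1", 60.0),
    WeeklyShare("W2", 58.0),
    WeeklyShare("W3", 41.0),
    WeeklyShare("W4", 22.0),
    WeeklyShare("W5", 12.0),
    WeeklyShare("W6", 10.0),
]

print(flag_swings(history))
```

A quarterly review of the same made-up series would only show the start and end values; the weekly comparison shows which weeks the drop happened in.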

The FDA issued 30 warning letters in March 2026 to substance use treatment providers about advertising claims. Outcome claims in AI-citable content now carry FDA exposure that did not exist 12 months ago.

Treatment centers making any kind of outcome claim in AEO-targeted content should review the claim against current FDA guidance. AI engines verify claims against authoritative sources when generating responses, which means unsourced clinical assertions now produce both a citation penalty and a regulatory risk.

The agencies running real AEO are the ones updating the playbook every quarter. Anyone selling a static AEO checklist is selling 2025 work in a 2026 environment.

The diligence question to add to your partner-evaluation list: what specifically did you change in your AEO methodology last quarter? A specific answer with examples is the right answer. “We are always learning” is not.

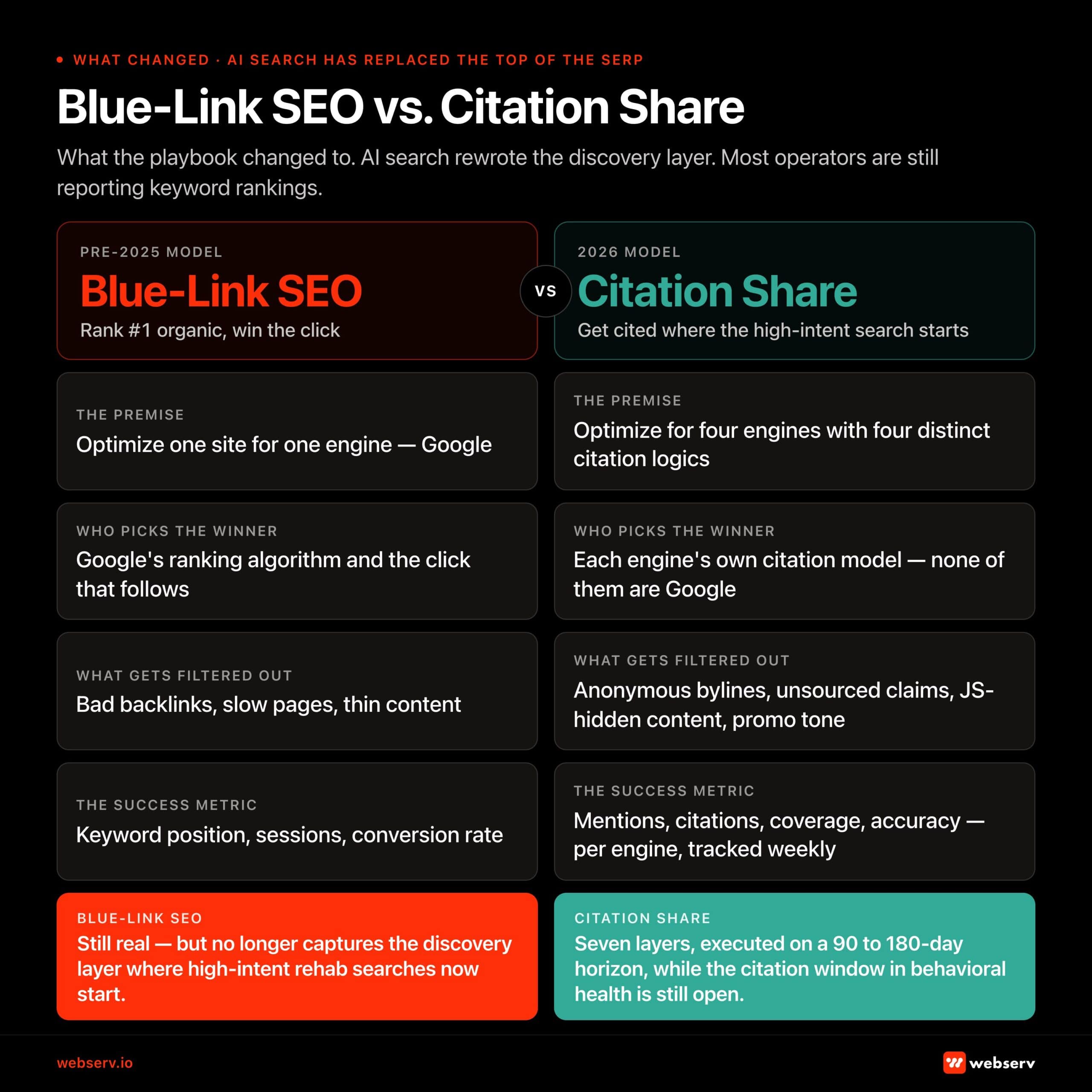

AI search has replaced the top of the SERP for high-intent rehab queries

The discovery layer for treatment center prospects has moved. Google AI Overviews now appear above the classic organic results for many of the head terms treatment centers used to rank for.

ChatGPT, Perplexity, and Claude conversational search are gaining share of high-intent prospects who used to start their journey on Google.

The data backs the shift. AI Overviews pull from the top 10 organic results 78% of the time, but ranking #1 organically does not guarantee a citation. Reddit is the #1 source across every major AI engine, cited at roughly 40% frequency. YouTube is now the most cited domain in AI Overviews and grew 34% in the last six months.

The state of AI source distribution across engines

| AI engine | Sources per answer | Top source preference | Treatment center implication |
|---|---|---|---|
| Google AI Overviews | Multiple, often 5-10 | Top-10 organic results (78% of citations) | Classic ranking still matters as the entry signal |
| ChatGPT (OpenAI) | 2-4 | Wikipedia (47.9%) + high-authority editorial | External authority signals carry disproportionate weight |
| Perplexity | 4-8 | Reddit (46.7%) + forums | Evidence-backed source material gets rewarded directly |
| Claude (Anthropic) | Few, authority-weighted | Named clinical authors + government health agencies | YMYL strictness highest here. Credentialed authorship required |

Treatment centers that built their organic strategy around classic blue-link rankings are increasingly invisible to the prospects starting their search at an AI tool. The high-intent traffic is being intercepted earlier in the journey, and the operators showing up in the citations are the ones capturing it.

The flip side: most treatment center content was never built for AI citation. Promotional tone, anonymous authors, thin claims, and dated information are exactly what AI engines exclude.

Operators who invest in clinical-review workflows, named-author bylines, evidence-backed claims, and proper schema have a meaningful window to dominate AI citations while competitors are still catching up.

Why measuring AI visibility is harder than measuring rankings

Treatment center marketing teams that approach AI citations the way they approach traditional keyword rankings are misreading a fundamentally different signal. AI visibility tooling routinely reports “wins” that do not translate to real user exposure, and the platforms themselves change which queries trigger AI features without warning.

Three patterns we see in audits illustrate the gap between dashboard metrics and reality. These show up alongside the rapid platform-level shifts noted above, which means citation share that looked solid in Q4 may be unreliable in Q1.

Pattern 1: The Semrush “position 1” lie

A center is reported as the top citation in an AI Overview for a high-volume keyword. The actual SERP shows the citation collapsed behind a “load more” button or hidden inside a paperclip-style expand icon.

The brand is technically cited and operationally invisible. The fix is to check every reported citation on the live SERP before reporting it as a win.

Pattern 2: Google pulling back AI Overviews on YMYL queries

A facility was prominently featured above the load-more button on AI Overview citations for “depression treatment Orange County,” with a linked brand mention in the visible portion of the result.

A platform-level decision by Google to pull AI Overviews from that category of healthcare query reduced the visibility of that citation to zero overnight, without any change to the underlying content or rankings.

Pattern 3: AI citations that produce no clicks

A facility had a prominent AI Overview placement for the informational query “Sublocade vs Suboxone.” The query had historically driven steady mid-funnel traffic to their site.

Once the AI Overview answered the question directly inside the SERP, CTR on the featured citation dropped to near zero. The AI was good enough at the answer that users did not need to click through.

The implication: AI visibility cannot be reported the same way ranking visibility was reported. Every claimed citation needs SERP-level verification. Trends matter more than point-in-time snapshots. And citation share is not interchangeable with traffic share, because pages cited prominently can still produce flat or declining click-through.
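
One way to make SERP-level verification routine is to log what a person actually saw next to what the dashboard reported. The sketch below assumes a hypothetical manual audit log; the `Placement` categories mirror the patterns described above, and the queries and positions are placeholders rather than real audit data.

```python
from dataclasses import dataclass
from enum import Enum


class Placement(Enum):
    VISIBLE = "visible"      # shown in the AI feature without any click
    LOAD_MORE = "load_more"  # behind a "load more" button
    EXPANDER = "expander"    # inside a collapsed paperclip-style icon
    ABSENT = "absent"        # reported by the tool, not found on the live SERP


@dataclass
class CitationCheck:
    query: str
    dashboard_position: int  # position the tracking tool reported
    placement: Placement     # what a reviewer saw on the live SERP


def visible_rate(checks: list[CitationCheck]) -> float:
    """Fraction of dashboard-reported citations a user could see without clicking."""
    if not checks:
        return 0.0
    visible = sum(1 for c in checks if c.placement is Placement.VISIBLE)
    return visible / len(checks)


# Placeholder audit rows (hypothetical queries, not client data).
checks = [
    CitationCheck("example head term A", 1, Placement.LOAD_MORE),
    CitationCheck("example head term B", 1, Placement.EXPANDER),
    CitationCheck("example head term C", 2, Placement.VISIBLE),
    CitationCheck("example head term D", 3, Placement.ABSENT),
]

print(f"{visible_rate(checks):.0%} of reported citations were visible")
```

Reporting the visible rate alongside the dashboard count keeps a buried "position 1" from being counted as a win.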

How each AI engine actually selects sources

The four major AI engines have distinct citation logics. Treatment centers optimizing for one without understanding the others are leaving citations on the table. Per Google’s helpful, reliable, people-first content guidance, the same E-E-A-T evaluation framework that informs classic Google rankings also drives AI Overview source selection.

| Engine | Citation density | Primary source pattern | What gets weighted |
|---|---|---|---|
| Google AI Overviews | Multiple citations, surfaced inline and behind expanders | 78% from top 10 organic; 22% from outside when authority outranks position | Top 30% of page = 55% of citations (vs. 24% middle, 21% bottom). Self-contained 134-167 word answer units are 4.2x more likely to be cited. |
| ChatGPT (OpenAI) | 2-4 sources per answer | Wikipedia (47.9%) + high-authority editorial | For treatment center queries, ChatGPT citations correlate strongly with PR mentions, peer-reviewed citations, and Wikipedia inclusion. |
| Perplexity | 4-8 sources per answer, high link visibility | Reddit (46.7%) + forum discussions | The most “transparent” AI search engine. Citations are part of the user experience, so brands producing evidence-backed source material get rewarded directly. |
| Claude (Anthropic) | Few citations, authority-weighted | Named clinical authors + government health agencies | YMYL strictness highest. Named MD/LCSW/LMFT/PhD authors, peer-reviewed citations, and NIDA/SAMHSA/CDC references get disproportionate weight. |
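
The 134-167 word answer-unit figure in the table lends itself to a quick editorial check. The sketch below is a rough approximation, assuming the page has already been reduced to plain text with blank lines between paragraphs; the function names are hypothetical and the word count is a simple whitespace split, not how any AI engine measures length.

```python
import re


def opening_answer_words(page_text: str) -> int:
    """Count whitespace-separated words in the first non-empty paragraph."""
    for paragraph in re.split(r"\n\s*\n", page_text.strip()):
        words = paragraph.split()
        if words:
            return len(words)
    return 0


def in_answer_window(word_count: int, low: int = 134, high: int = 167) -> bool:
    """True when the opening paragraph falls inside the 134-167 word range."""
    return low <= word_count <= high


# Placeholder text: a 150-word opening paragraph followed by a second paragraph.
sample = " ".join(["word"] * 150) + "\n\n" + "Second paragraph of the page."

count = opening_answer_words(sample)
print(count, in_answer_window(count))
```

A check like this only flags length. Whether the opening paragraph is actually self-contained and answers the query still needs an editor's read.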

The cross-cutting pattern: content updated within the past 12 months earns 3.2x more citations than content older than 24 months. Stale content loses citation share fast.

The AI engines also fan out from the original query into related sub-questions during retrieval, pulling sources for each branch. A page that earns the answer to one query can be cited across the entire fan-out tree if the related-question coverage is built into the same content.

Building for the fan-out, rather than for a single query, is the depth move most operators have not made yet.

Why behavioral health is a special case under YMYL

YMYL (Your Money or Your Life) designation puts treatment center content under the strictest AI quality scrutiny. The AI engines weight credentialed authorship, evidence sourcing, and authority signals more aggressively for healthcare queries than they do for unregulated categories.

The Google March 2025 update extended YMYL-style scrutiny to broader healthcare adjacencies. The bar for behavioral health content tightened in the last 12 months even where the underlying classification did not change.

Three implications for treatment center content.

| Implication | What the AI engines do | What the operator has to ship |
|---|---|---|
| Named clinical authorship is essentially required | Pages without a named clinician or with “admin” as the author are structurally disadvantaged in AI citation selection. | Every clinical page gets a named MD, LCSW, LMFT, PhD, or equivalent byline with a verifiable credential signal and a live author profile page. |
| Every factual claim needs an external citation | AI engines verify claims against authoritative sources when generating responses. Unsourced clinical assertions get weighted lower or excluded entirely. Content that contradicts authoritative bodies gets actively ignored or contradicted. | Link to NIDA, SAMHSA, CDC, or peer-reviewed PubMed citations for every clinical claim. Don’t assert without sourcing. |
| Promotional tone gets filtered out | AI engines are tuned to recognize sales-oriented content (“compassionate care,” “experienced team,” “healing environment”) and weight it lower for informational queries. | Write like a clinical reference document with operator perspective layered on top, not like a brochure. |

The combined effect: most treatment center content currently published is structurally disadvantaged for AI citation. The operators investing in clinical-review workflows, evidence-backed sourcing, and editorial discipline have a meaningful window before the rest of the category catches up.

What real AI optimization actually involves

If your marketing company tells you they are doing AEO and the answer is “we set up an llms.txt file,” they are still operating off flawed theories from six months ago.

The seven layers below build on the foundational SEO work covered in our Top 25 SEO Agencies for behavioral health ranking. Operators auditing their current agency for AEO readiness should also assess whether the agency has executed against those core SEO basics first; AEO compounds on top of solid SEO, not in place of it.

Evidence shows that LLM-specific bots, including GPTBot and PerplexityBot, often do not request or access llms.txt files at all. The llms.txt pitch became popular in mid-2025 because it sounded technical and easy to implement. It does not move citation share in measurable ways.
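
Operators can check the llms.txt claim against their own server logs rather than taking anyone's word for it. The sketch below assumes access logs in the common "combined" format and matches crawlers by the `GPTBot` and `PerplexityBot` user-agent tokens named above; the sample lines are fabricated for illustration, and the regular expression may need adjusting for a given server's log layout.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer, and user-agent fields of a
# combined-format access log line (an assumption about the log layout).
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)
BOT_TOKENS = ("GPTBot", "PerplexityBot")


def llms_txt_fetches(log_lines):
    """Return {bot: (total_requests, llms_txt_requests)} for each bot token."""
    total, llms = Counter(), Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        path = match.group("path").split("?")[0]
        for token in BOT_TOKENS:
            if token in match.group("agent"):
                total[token] += 1
                if path == "/llms.txt":
                    llms[token] += 1
    return {token: (total[token], llms[token]) for token in BOT_TOKENS}


# Fabricated sample lines using documentation-range IP addresses.
sample_log = [
    '203.0.113.5 - - [10/Jan/2026:12:00:00 +0000] "GET /detox/ HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '203.0.113.5 - - [10/Jan/2026:12:00:04 +0000] "GET /what-we-treat/ HTTP/1.1" 200 4096 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '203.0.113.9 - - [10/Jan/2026:12:01:00 +0000] "GET /insurance/ HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.7 - - [10/Jan/2026:12:02:00 +0000] "GET /llms.txt HTTP/1.1" 200 300 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

print(llms_txt_fetches(sample_log))
```

If the second number in each pair stays at zero over several weeks of real logs, the file is not being read by those crawlers on that site.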

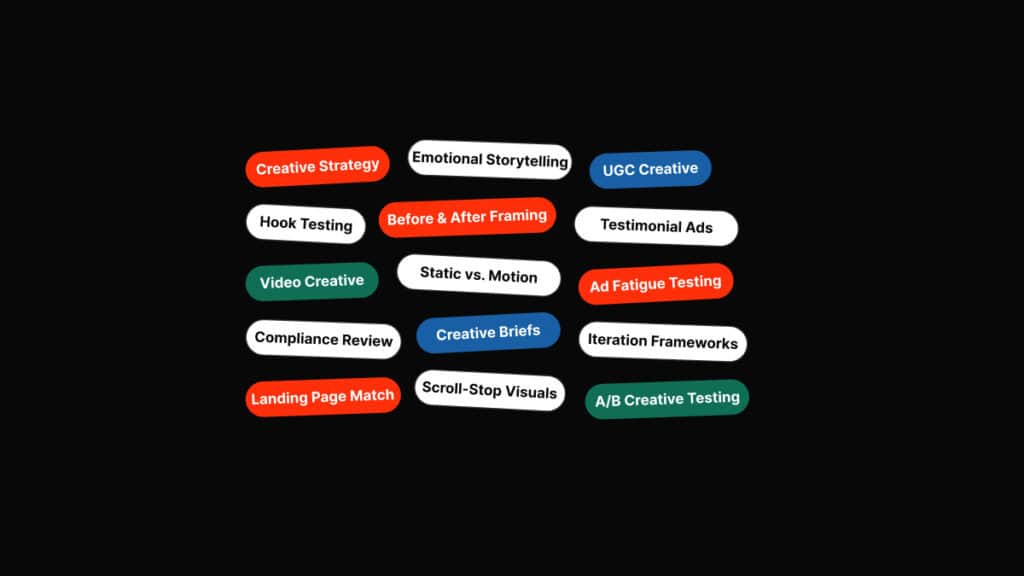

Real AI optimization for treatment center content involves seven layers operating together.

| Layer | What it is | What it produces |
|---|---|---|
| Layer 1: Sentence structure | Clear declarative sentences with explicit subjects and unambiguous claims. AI engines parse content as semantic triples (subject, predicate, object). | Citations on the specific factual claims on the page. State a fact, back it with evidence. |
| Layer 2: Code structure | Server-rendered HTML, semantic HTML5 elements, content visible in the default page render. The exact pattern from our mobile-first indexing guide. | AI crawler accessibility. Anything hidden behind JS execution, accordions, popups, or load-more is at risk of being missed. |
| Layer 3: Digital PR | External authoritative sources citing the treatment center: healthcare publications, addiction-focused journals, recovery-community sites, credentialed expert features. | The entity layer AI engines use to weight citations. Same authority signal that helps classic SEO, weighted more heavily for YMYL. |
| Layer 4: Video production | YouTube content that mentions the treatment center, services, or methodology. Includes brand-owned channels AND third-party interviews/podcasts/educational content. | AI Overview citations. YouTube is now the most cited domain in AI Overviews and grew 34% in six months. |
| Layer 5: Social media presence | Organic mentions of the brand inside relevant subreddits (recovery, addiction, mental health) and other forums. Real users talking about the facility in real recovery conversations. | Reddit citations: the single largest source across every major AI engine. Cannot be faked without violating policies, but the underlying signal is achievable through community management. |
| Layer 6: Google reviews | Volume, recency, and content of Google reviews. Reviews are scanned for sentiment, services mentioned, and outcomes referenced. | Both an authority signal and a content source for AI engines. 200 detailed reviews mentioning specific clinical programs outperform 30 generic reviews. |
| Layer 7: Site structure and internal linking | Clear topical clusters with strong internal linking. AI crawlers use the link graph to understand which pages are central to a site’s expertise. | Authority concentration on the pages you want cited. Orphan pages are weighted lower regardless of individual quality. |
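
For Layer 2, a quick test is whether key phrases appear in the raw HTML the server returns, before any JavaScript runs. The sketch below uses only Python's standard-library HTML parser; the sample markup and phrases are placeholders, and it assumes the raw HTML has already been fetched (for example with a plain HTTP request that does not execute scripts).

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text outside <script>, <style>, and <template> elements."""

    SKIP = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def missing_from_raw_html(raw_html: str, phrases: list[str]) -> list[str]:
    """Return the phrases that do not appear in the server-rendered text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [p for p in phrases if p.lower() not in text]


# Placeholder page: the FAQ text only exists inside a script, so a crawler
# that does not execute JavaScript would not see it.
raw_html = (
    '<main><h1>Opioid detox</h1>'
    '<p>Placeholder paragraph about the detox process.</p>'
    '<div id="faq"></div>'
    '<script>renderFaq("Does insurance cover detox?")</script></main>'
)

print(missing_from_raw_html(raw_html, ["the detox process", "Does insurance cover detox?"]))
```

Any phrase this reports as missing is content that depends on JavaScript execution to appear, which is the at-risk category the table describes.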

These seven layers compound. Treatment centers that invest in three or four of them and skip the rest see citation lifts that plateau quickly. Operators who address all seven in parallel see compounding citation share over a 6 to 12 month horizon. The site-structure layer specifically depends on the foundation from the technical SEO fix list and the topical-cluster mapping from the keyword strategy framework.
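
The orphan-page problem in Layer 7 can be checked directly from a crawl's internal link map. The sketch below assumes a hypothetical dictionary of page paths to the internal paths each page links to, such as a crawler export might be reshaped into; the paths are placeholders.

```python
def find_orphans(links: dict[str, set[str]], entry_points: set[str]) -> set[str]:
    """Pages that no other page links to, excluding known entry points.

    `links` maps each page path to the set of internal paths it links to.
    Self-links do not count as inbound links.
    """
    linked_to = set()
    for source, targets in links.items():
        linked_to.update(t for t in targets if t != source)
    return set(links) - linked_to - entry_points


# Placeholder link map (hypothetical site structure).
site_links = {
    "/": {"/what-we-treat/"},
    "/what-we-treat/": {"/", "/opioid/"},
    "/opioid/": {"/what-we-treat/"},
    "/yoga-in-recovery/": {"/"},
}

print(find_orphans(site_links, entry_points={"/"}))
```

In this made-up map, `/yoga-in-recovery/` links out but receives no internal links, so it is reported as an orphan even though the page itself may be strong.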

That site-architecture work is the same foundation as our Topical Authority for Treatment Centers framework, and the digital PR layer compounds with the 7 Digital PR Tactics for Rehab Centers playbook.

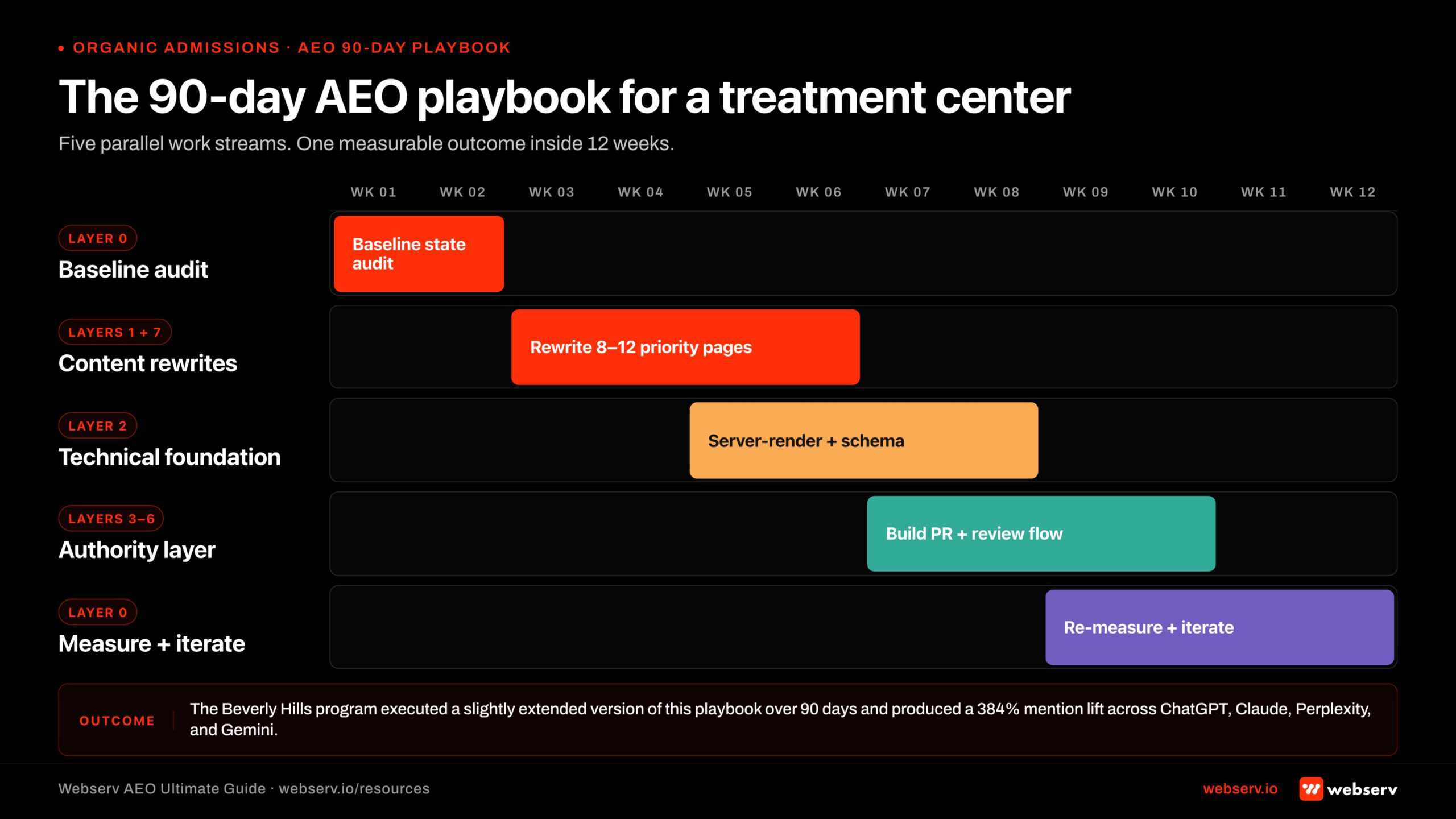

Case study: a residential treatment center in California

A residential treatment program we work with in the Beverly Hills area gives a representative example of what citation lift looks like when the full playbook is run.

The engagement started in October 2025 with a full AI and LLM technical audit. The objective was to increase brand visibility in AI-generated responses across the major platforms (ChatGPT, Claude, Perplexity, Google Gemini, Bing Copilot), improve citation rates in LLM outputs, optimize 25 high-priority pages for AEO best practices, and establish the program as an authoritative source in addiction treatment.

Phase 1 (November 2025)

Ten foundational educational pages were rewritten to citation-worthy structure, including a path-to-detoxification explainer, an alcohol treatment overview, signs of opioid abuse, fentanyl lethality content, an integrative-treatment effectiveness piece, how to get someone into rehab, the genetics of addiction, Xanax addictiveness, and yoga in recovery contexts.

Each page was restructured with a direct answer in the first 134 to 167 words, named clinical authorship, external citations to authoritative bodies, FAQPage and Article schema, and clean technical foundation.
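
The Article and FAQPage markup mentioned above is usually emitted as JSON-LD. The sketch below builds both objects from schema.org types and properties (`Article`, `Person`, `FAQPage`, `Question`, `Answer`); every value is a placeholder, the helper names are hypothetical, and the exact property set used on the pages in this case study is not documented here, so treat this as one plausible shape rather than the markup that was shipped.

```python
import json


def article_jsonld(headline, author_name, credential, date_modified, sources):
    """Article markup with a named, credentialed author and external citations."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "dateModified": date_modified,
        "author": {
            "@type": "Person",
            "name": author_name,
            "honorificSuffix": credential,
        },
        "citation": sources,
    }


def faq_jsonld(pairs):
    """FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Placeholder values only; swap in the real byline, date, and sources.
article = article_jsonld(
    headline="Example page headline",
    author_name="Example Clinician",
    credential="LCSW",
    date_modified="2026-01-15",
    sources=["https://nida.nih.gov/", "https://www.samhsa.gov/"],
)
faq = faq_jsonld([("Example question?", "Example answer text.")])

print(json.dumps(article, indent=2))
print(json.dumps(faq, indent=2))
```

Each printed object would go inside its own `<script type="application/ld+json">` tag in the server-rendered HTML, and the FAQ text should also be visible on the page itself.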

Phase 2 (January 2026)

Fifteen service-level and treatment-modality pages followed the same playbook: insurance verification page, the parent what-we-treat hub, individual substance pages (opioid, cocaine, benzodiazepine, dual-diagnosis, meth, heroin, stimulants, prescription drugs, marijuana, fentanyl), therapy modality pages (CBT, DBT), and a Beverly Hills location residential page.

Results across the platforms by mid-January 2026

Brand mentions in actual AI responses increased 384% over the baseline window. The program is now cited 4.8x more often than at the start of the engagement.

| Platform | Citation lift pattern |
|---|---|
| ChatGPT | Increased mention frequency in treatment center recommendations. Now cited in responses about Beverly Hills addiction treatment specifically. |
| Google Gemini | Enhanced presence in local treatment queries. Better representation in insurance-related questions. |
| Claude | Improved source attribution for integrative treatment approaches. Featured in detailed treatment methodology queries. |
| Perplexity | Higher ranking in source citations for detox information. Increased visibility in comparative treatment searches. |

One nuance worth flagging: the “AI Visibility Score” reported by the tracking tool actually decreased slightly during the same window. The score is a composite metric that weights ranking position and citation prominence.

Mentions, the metric that drives real traffic, increased 384%. This is the gap between dashboard metrics and reality. Operators should weight actual mentions in AI responses more heavily than composite visibility scores when measuring AEO progress.

The 90-day playbook to start getting cited

A treatment center that runs a deliberate AEO program should see measurable citation lift within a quarter. The sequence below is what we use with new clients.

| Window | Work | Output |
|---|---|---|
| Weeks 1-2: Baseline audit | Use OtterlyAI, Siftly, HubSpot AEO, or Trakkr to baseline current citation share across ChatGPT, Perplexity, Claude, and Gemini. Run manual SERP checks on the top 30 commercial and informational queries. | Documented starting state, competitor citation map, and priority query list. |
| Weeks 3-6: Content layer rewrites | Identify 8-12 high-priority pages where the center has existing authority but weak AEO structure. Rewrite each with a direct answer in the first 134-167 words, named clinical author bylines, external citations to NIDA/SAMHSA/CDC, FAQPage and Article schema, and clean H2/H3 hierarchy. | Citation-worthy content layer across the priority page set. Matches Layer 1 (sentence structure) and Layer 7 (site structure). |
| Weeks 5-8: Technical foundation | Audit and remediate against the AI crawler accessibility standard. Server-render primary content. Add MedicalOrganization and Physician schema. Verify semantic HTML5 elements. Confirm mobile-first indexing readiness. | AI crawler accessibility lifted to 2026 standard. Matches Layer 2 (code structure). |
| Weeks 7-10: Authority layer | Begin or accelerate digital PR. Identify 3-5 healthcare publications and addiction-focused outlets for named expert features. Coordinate 1-2 podcast appearances or video interviews per month for clinical leadership. Run systematic Google review acquisition with prompts encouraging specific service mentions. | External authority citations begin compounding. Matches Layers 3-6 of the seven-layer model. |
| Weeks 9-12: Measure and iterate | Re-run the citation share audit. Compare baseline to current state across all four major AI engines. Identify which content patterns produced the largest lift and double down on those. Diagnose underperforming patterns (often: missing first-134-word direct answer, missing named author, no external citations). | First measurable citation lift across platforms. Playbook calibrated to what is working in the specific market. |

The center we worked with executed a slightly extended version of this 90-day playbook and produced the 384% mention lift cited above. The pattern is replicable. The execution discipline is the variable.

Common AEO mistakes treatment centers make

Five mistakes account for most of the audit findings we surface when reviewing a treatment center’s AEO posture.

| Mistake | Why it fails | Fix |
|---|---|---|
| 1. Trusting dashboard metrics without checking live SERPs | AI tracking tools report citations that do not match user experience. Buried citations behind load-more or paperclip expanders count as “position 1” in the dashboard and as invisible in reality. | Mandatory SERP-level verification for every claimed citation before it gets reported as a win. |
| 2. llms.txt obsession | Agencies pitch llms.txt as the AEO solution. Evidence indicates GPTBot, PerplexityBot, and the major AI crawlers do not consistently fetch llms.txt files. The work is performative. | Real AI optimization happens at the content, code, and authority layers, not in a single text file. Put the time into the seven layers above. |
| 3. Anonymous or promotional content | Pages without named clinical authorship or with marketing-brochure tone (“compassionate care,” “our experienced team”) are excluded from AI citations for healthcare queries. | Clinical-review workflow with named bylines. Editorial standard that prioritizes evidence and clarity over sales tone. |
| 4. Thin or unsourced clinical claims | Pages that assert clinical facts without external citation get weighted lower or contradicted by AI in responses. | Rigorous source linking to NIDA, SAMHSA, CDC, or peer-reviewed PubMed citations for every clinical assertion. |
| 5. Treating AEO like traditional SEO | The layers look like SEO and operate differently. Cross-platform citation logic, YMYL strictness, and weekly platform-level changes require an adaptive playbook that classic SEO did not. Operators working off mid-2025 AEO checklists are working with stale playbooks. | Operate with a partner who monitors platform changes weekly and adjusts the playbook accordingly. |

The fix for all five is the same posture: AEO is a continuously moving target. The marketing partner has to be set up to monitor the changes weekly and adjust the playbook accordingly.

How to measure AI citation share of voice

Citation share is the right metric to optimize against. The tools that measure it have matured fast in the last year.

Primary tools

OtterlyAI, Siftly, HubSpot AEO, and Trakkr each track citation share across ChatGPT, Perplexity, Claude, and Google AI Overviews. They surface per-query citation data, competitor share, and trend lines week over week.

Each platform has strengths: OtterlyAI for cross-platform tracking, Siftly for competitive benchmarking, HubSpot AEO for the prompt-level breakdown of where competitors are outranking you.

The four metrics that matter

| Metric | What it measures | Why it matters |
|---|---|---|
| Mentions | Raw brand-name appearances in AI responses | The most direct measure of brand visibility. The metric the Beverly Hills case study moved 384%. |
| Citations | Linked references to your website content | Higher commercial value because they drive traffic. |
| Coverage | Percentage of AI responses on relevant queries that draw from your owned content | Measures depth of authority within the priority query set. |
| Accuracy | Factual fidelity of AI representations of your brand | Catches misattribution and incorrect claims before they compound. |

The share-of-voice formula

(Your brand mentions / total market mentions in the query set) × 100. Position-weighted versions of this formula (where mentions in the visible portion of an AI Overview count more than mentions buried in expanders) are more accurate but harder to produce at scale.
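
The formula above, and a position-weighted variant of it, can be sketched in a few lines. The placement labels and the 1.0 / 0.5 weights below are illustrative assumptions, not an industry standard, and the mention list is made up for the example.

```python
def share_of_voice(brand_mentions: int, total_mentions: int) -> float:
    """(brand mentions / total market mentions in the query set) x 100."""
    if total_mentions == 0:
        return 0.0
    return 100 * brand_mentions / total_mentions


def weighted_share_of_voice(mentions, brand: str, weights: dict[str, float]) -> float:
    """Position-weighted share of voice.

    `mentions` is an iterable of (brand_name, placement) pairs and `weights`
    maps each placement label to how much a mention there counts.
    """
    mentions = list(mentions)
    total = sum(weights[placement] for _, placement in mentions)
    if total == 0:
        return 0.0
    ours = sum(weights[placement] for name, placement in mentions if name == brand)
    return 100 * ours / total


# Hypothetical weights: a mention hidden in an expander counts half as much.
WEIGHTS = {"visible": 1.0, "expander": 0.5}

# Made-up mention log for one query set.
mention_log = [
    ("our-center", "visible"),
    ("our-center", "expander"),
    ("our-center", "expander"),
    ("competitor", "visible"),
    ("competitor", "visible"),
]

print(share_of_voice(3, 5))                                         # unweighted
print(weighted_share_of_voice(mention_log, "our-center", WEIGHTS))  # weighted
```

With these made-up numbers the unweighted figure is 60% and the weighted figure is 50%, which shows how counting buried mentions at full value overstates visibility.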

Baseline benchmarks for behavioral health

| AEO investment level | Typical share of voice |
|---|---|
| Centers that have not invested in AEO | 0-5% share of voice across target query set |
| Centers that have run a 90-day AEO playbook | 15-30% share of voice |
| Category leaders for AI citations in addiction treatment | 35-50% share of voice across priority query sets |

Measuring this monthly and reporting it as a primary marketing KPI keeps the team accountable to a metric that reflects real discovery-layer presence rather than the legacy ranking metrics that no longer capture the full picture.

What success looks like at twelve weeks

A treatment center that runs the AEO playbook with discipline should see measurable change inside a quarter.

| Window | Milestones |
|---|---|
| Weeks 1-4: Foundation work | Baseline audit complete. Top 8-12 content pages rewritten to citation-worthy structure. Schema markup audit and remediation underway. Named clinical authorship implemented across the priority content set. |
| Weeks 5-8: Authority layer activation | Technical foundation remediation complete. Digital PR pipeline producing first external citations. Google review acquisition systematic. First measurable citation lift visible in tracking tools. |
| Weeks 9-12: Citation lift compounding | Brand mentions across ChatGPT, Perplexity, Claude, and Gemini up 100-400% from baseline depending on starting state and execution rigor. Share of voice rising on priority query sets. First measurable referral traffic from AI search visible in analytics (typically 5-15% of total organic by end of week 12). |
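
Seeing AI referral traffic in analytics depends on recognizing the referrer. The sketch below classifies referrer URLs by hostname; the hostname list is an assumption that will need maintaining as platforms change domains, the sample referrers are made up, and many AI-driven visits arrive with no referrer at all, so this undercounts.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames for each engine; verify against your own analytics.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


def ai_engine_for(referrer: str) -> Optional[str]:
    """Return the AI engine name for a referrer URL, or None if not recognized."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


def ai_share_of_sessions(referrers: list[str]) -> float:
    """Percentage of the supplied sessions whose referrer is a known AI engine."""
    if not referrers:
        return 0.0
    hits = sum(1 for r in referrers if ai_engine_for(r))
    return 100 * hits / len(referrers)


# Made-up session referrers.
session_referrers = [
    "https://www.google.com/",
    "https://www.perplexity.ai/search?q=example",
    "https://www.google.com/",
    "https://www.bing.com/",
]

print(ai_share_of_sessions(session_referrers))
```

To compare against the 5-15% figure in the table, pass in the organic session set for the period rather than all traffic.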

The Beverly Hills program case study above represents the upper end of what is achievable inside three months. Less mature programs starting from a thinner content base produce smaller citation lifts on the same timeline but lay the foundation for compounding gains in months 4 to 12.

The compounding nature is the key takeaway. AEO is not a one-time fix. Each new citation makes the next citation more likely (AI engines weight prior citations as authority signals for future selection). Each piece of citation-worthy content makes the broader site more discoverable. The 90-day playbook is the foundation. The 12-month execution discipline is what builds the moat.

What to ask your SEO or AEO partner this week

Three questions surface whether your partner is operating on the 2026 AEO standard or working from a 2024 playbook.

First, ask for a manual SERP audit of your top 10 commercial queries. The partner should be able to show you, on a live SERP, which AI Overview features appear, which sources are cited, and where your brand appears (or does not).

They should also be able to show whether the citation is in the visible portion of the AI feature or hidden behind a load-more or paperclip expander. A vague answer that references dashboard reports without SERP-level verification is the first warning sign.

Second, ask what their AEO playbook is. If the answer is “we set up an llms.txt file,” they are working off a deprecated theory.

The right answer references the seven layers above (sentence structure, code structure, digital PR, video, social, reviews, site structure) and an editorial workflow for clinical-review content with named bylines and external citations.

Third, ask for the citation share trend over the last 90 days across ChatGPT, Perplexity, Claude, and Google AI Overviews.

The right answer includes a specific number, a competitor comparison, and a list of which queries produced the largest lift. The wrong answer is “we are working on it” or “AEO tracking is hard.”
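To make "a specific number, a competitor comparison, and a list of which queries produced the largest lift" concrete, here is a minimal sketch of how citation share could be computed from manually verified checks. The `Check` record, the period labels, the brand names, and the query names are all hypothetical placeholders, not the output of any particular tracking tool. The sketch assumes each check records whether a cited brand was in the visible portion of the answer or hidden behind an expander.

```python
from dataclasses import dataclass, field


@dataclass
class Check:
    """One manual check of one query on one engine (hypothetical schema)."""
    period: str   # e.g. "baseline" or "day_90"
    engine: str   # e.g. "google_aio", "chatgpt"
    query: str
    # brand -> True if the citation was visible, False if hidden
    # behind a load-more button or a collapsed expander
    cited: dict = field(default_factory=dict)


def citation_share(checks, brand, period, visible_only=True):
    """Fraction of checks in `period` where `brand` was cited."""
    in_period = [c for c in checks if c.period == period]
    if not in_period:
        return 0.0
    hits = sum(
        1 for c in in_period
        if brand in c.cited and (c.cited[brand] or not visible_only)
    )
    return hits / len(in_period)


def lift_by_query(checks, brand, start, end):
    """Per-query change in visible citation share, largest lift first."""
    lifts = {}
    for q in sorted({c.query for c in checks}):
        subset = [c for c in checks if c.query == q]
        lifts[q] = (citation_share(subset, brand, end)
                    - citation_share(subset, brand, start))
    return sorted(lifts.items(), key=lambda kv: kv[1], reverse=True)


# Illustrative data only; these are not real audit results.
checks = [
    Check("baseline", "google_aio", "query_a", {"competitor": True}),
    Check("baseline", "chatgpt", "query_a", {}),
    Check("baseline", "google_aio", "query_b", {"our_brand": False}),
    Check("baseline", "chatgpt", "query_b", {"competitor": True}),
    Check("day_90", "google_aio", "query_a", {"our_brand": True, "competitor": True}),
    Check("day_90", "chatgpt", "query_a", {"our_brand": True}),
    Check("day_90", "google_aio", "query_b", {"our_brand": False, "competitor": True}),
    Check("day_90", "chatgpt", "query_b", {"our_brand": True}),
]

baseline_visible = citation_share(checks, "our_brand", "baseline")
baseline_any = citation_share(checks, "our_brand", "baseline", visible_only=False)
day90_visible = citation_share(checks, "our_brand", "day_90")
competitor_day90 = citation_share(checks, "competitor", "day_90")
ranked_lift = lift_by_query(checks, "our_brand", "baseline", "day_90")
```

In this toy data, the gap between `baseline_any` and `baseline_visible` is the dashboard-versus-SERP gap described earlier: a hidden citation counts in a report but not in what a user sees. Counting only visible citations by default is a design choice of this sketch, not an industry standard.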

AI search is the new front page of Google for treatment center queries. The discovery layer has moved.

Treatment centers showing up in the citations are capturing the high-intent traffic that used to flow to blue-link rankings. The companion patterns that drive local AI discovery and map-pack-to-AI overlap build on the seven-layer foundation above and compound with it.

Operators evaluating where to start should read this guide alongside our technical SEO foundations guide and the keyword strategy framework for rehab and mental health providers. The AEO playbook only works on top of a clean technical foundation and a targeted keyword set.

Treatment centers that are not investing in AEO are losing share on a metric most of their marketing teams are still not measuring. If you want help evaluating which agencies actually do this work, our Top 20 AI Optimization Agencies for Rehab listicle is the working shortlist.

The full capability and engagement model lives on our AEO services page.

The window to dominate AI citations in behavioral health is open right now and closing. The fix is the seven-layer playbook above, executed with discipline over a 90-to-180-day horizon. The cost of waiting is invisibility in the discovery layer where high-intent searches increasingly start. Book an intro call and we will run the citation-share audit on your priority query set as part of the diligence.

Frequently asked questions about AI citation for treatment centers

Is AEO the same as SEO or something different?

AEO (Answer Engine Optimization) shares a foundation with SEO but optimizes for different surfaces. SEO targets the ranked list of organic results. AEO targets citations and references inside AI-generated answers from Google AI Overviews, ChatGPT, Perplexity, and similar engines. The content disciplines overlap, but the success signals are different.

Practically, a facility cited in 30 percent of relevant AI Overviews but ranked sixth in organic is in a stronger position than one ranked second organically with zero AI citations. The first touchpoint for most high-intent patients is now an AI answer, and the AI either mentions your facility or it does not.

For most behavioral health programs, the right approach is SEO and AEO running together. AEO does not replace organic ranking work, but it shifts the priority of certain content formats (structured Q&A, citable expert content, schema-bearing pages) toward the formats AI engines prefer.

Can the same content work for ChatGPT, Perplexity, and Google AI Overviews?

The foundational content shape is the same across engines: direct, structured, citation-quality answers from authoritative sources with named expertise. But each engine selects sources differently. Perplexity weights real-time web crawl heavily. ChatGPT relies on training data plus selective live retrieval. Google AI Overviews leans on its existing ranking signals plus E-E-A-T markers.

That means well-built AEO content tends to perform across all three engines, but optimization for each requires slightly different tactical work. Perplexity rewards fresh, dated content updates. ChatGPT rewards canonical authority pages that show up in many crawled sources. Google AI Overviews rewards Schema.org markup and credentialed author signals.

We work the foundation first (citable, structured, authoritative content) and then add engine-specific tactics where the data shows your facility is underrepresented in a specific surface. Trying to optimize for one engine in isolation usually leaves value on the table elsewhere.
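As one illustration of the Schema.org markup and credentialed author signals mentioned above, the sketch below builds JSON-LD for a clinically reviewed page. The types and properties used (`MedicalWebPage`, `author`, `reviewedBy`, `lastReviewed`, `citation`) are part of the Schema.org vocabulary. The helper name, the URL, the people, and the cited source are hypothetical placeholders. Whether a given engine rewards this markup is the claim made in this guide, not something the code demonstrates.

```python
import json


def medical_page_jsonld(url, headline, author, reviewer, last_reviewed, citations):
    """Build a Schema.org MedicalWebPage description as a plain dict.

    `author` and `reviewer` are dicts with "name" and "job_title" keys
    (a hypothetical input shape chosen for this sketch).
    """
    def person(p):
        return {"@type": "Person", "name": p["name"], "jobTitle": p["job_title"]}

    return {
        "@context": "https://schema.org",
        "@type": "MedicalWebPage",
        "url": url,
        "headline": headline,
        "author": person(author),          # named byline
        "reviewedBy": person(reviewer),    # clinical reviewer
        "lastReviewed": last_reviewed,     # ISO 8601 date string
        "citation": citations,             # external sources as text
    }


# Placeholder values for illustration only.
doc = medical_page_jsonld(
    url="https://example.com/what-is-medical-detox",
    headline="What Is Medical Detox?",
    author={"name": "Jane Doe", "job_title": "Clinical Writer"},
    reviewer={"name": "John Roe", "job_title": "Medical Director"},
    last_reviewed="2026-01-15",
    citations=["Placeholder external source title"],
)

# The serialized string would go inside a
# <script type="application/ld+json"> tag in the page head.
markup = json.dumps(doc, indent=2)
```

Keeping the byline, reviewer, and review date as function arguments means the markup can be regenerated whenever a page is re-reviewed, which also supports the dated content updates discussed above.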

Does AI Overview citation actually drive admissions?

Yes, with lag. AI Overview citations rarely produce immediate admit volume the way Google Ads does. They produce three downstream effects: families discover your facility name during research, brand recall builds in the AI engines that families return to, and direct branded searches increase over months.

For treatment centers that have measured this carefully, AI Overview citation translates to direct-branded-search lift in 60 to 90 days and admit attribution lift in 4 to 6 months. The pattern is similar to how PR coverage influences purchase decisions: not the immediate driver, but a meaningful upstream layer.

The facilities that ignore AEO are betting that organic rankings alone will continue producing admits at current cost-per-admit levels. That bet looked safe in 2023. It looks increasingly questionable in 2026.

Does AEO matter if our facility is in a small or regional market?

Yes. AI engines do not gate by market size the way local search does. A facility in a small regional market with strong AEO discipline can be cited as the answer for regional queries while larger competitors in the same region are absent. The opportunity is often bigger in smaller markets because fewer competitors are optimizing for it.

The case study we walk through in this guide is a residential program in a mid-size Western market that went from zero AI citations to 30 percent share-of-voice on its top 10 target queries in 90 days. Smaller markets compound faster precisely because the competitive baseline is lower.

The work transfers across market sizes. The same content discipline, schema implementation, and citation strategy produces results whether you operate in Los Angeles, Phoenix, or Spokane.

How long until AEO work shows up in AI Overview citations?

Most facilities see their first AI Overview citations within 30 to 60 days of foundational AEO work (schema deployment, structured Q&A publishing, expert authority pages). Measurable share-of-voice on target queries typically builds between months 3 and 6. Compounding citation depth and reduced brand-search dependency usually become defensible in months 6 to 12.

The timeline depends on starting authority. Facilities with strong existing organic visibility see AI citations faster because AI engines weight existing trust signals. Facilities starting from zero authority need a foundational quarter of E-E-A-T work before AI citation reliably picks up.

We treat the first 90 days as proof-of-mechanism work (citations exist and the methodology is working) and the next 6 to 12 months as the period where citation depth becomes a real competitive moat.

Most in-house teams hit a wall not because they lack knowledge, but because they lack bandwidth.

When you are ready to hand it off, Webserv has spent 9 years executing exactly this for treatment centers nationwide.

Trevor Gage is the Director of Earned & Owned Media at Webserv. Webserv works with behavioral health and addiction treatment centers on SEO, paid media, and full-funnel admissions strategy.